What they mean is that an image generator of this type can be manipulated naturally, simply by telling it what to do. We extend these findings to show that manipulating visual concepts through language is now within reach. Image GPT showed that the same type of neural network can also be used to generate images with high fidelity. GPT-3 showed that language can be used to instruct a large neural network to perform a variety of text generation tasks. In this case the agent uses the language understanding and context provided by GPT-3 and its underlying structure to create a plausible image that matches a prompt. Turning text into images has been done for years by AI agents, with varying but steadily increasing success.

But it doesn’t output garbage or serious grammatical errors, which makes it suitable for a variety of tasks, as startups and researchers are exploring right now.ĭALL-E (a combination of Dali and WALL-E) takes this concept one further. Some of these attempts will be better than others indeed, some will be barely coherent while others may be nearly indistinguishable from something written by a human.

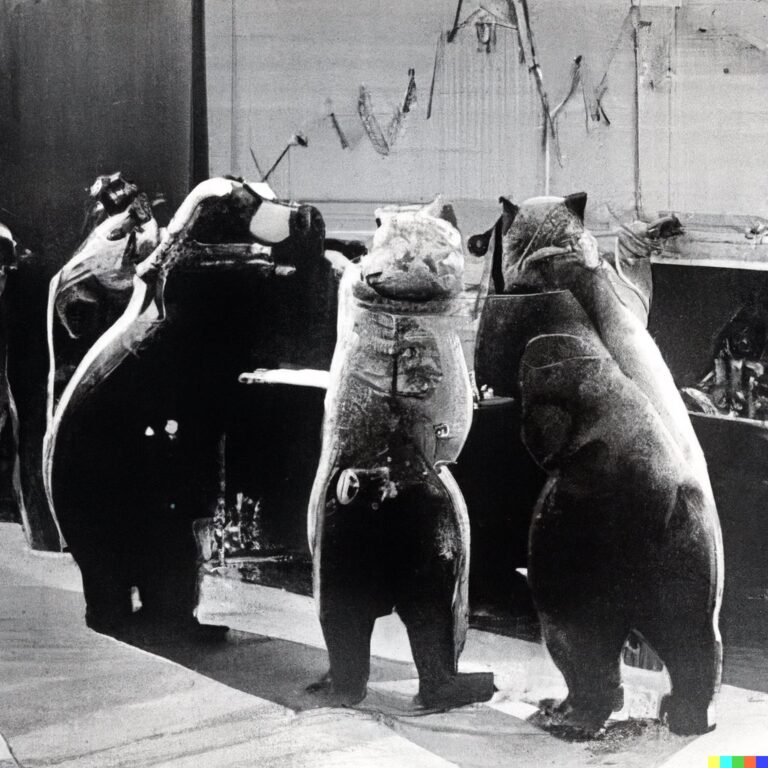

So if you say “a story about a child who finds a witch in the woods,” it will try to write one - and if you hit the button again, it will write it again, differently. What researchers created with GPT-3 was an AI that, given a prompt, would attempt to generate a plausible version of what it describes. OpenAI’s latest strange yet fascinating creation is DALL-E, which by way of hasty summary might be called “GPT-3 for images.” It creates illustrations, photos, renders or whatever method you prefer, of anything you can intelligibly describe, from “a cat wearing a bow tie” to “a daikon radish in a tutu walking a dog.” But don’t write stock photography and illustration’s obituaries just yet.Īs usual, OpenAI’s description of its invention is quite readable and not overly technical.
